Privacera AI Governance Overview

Generative AI and large language models (LLMs) are transforming enterprise operations and customer interactions. To succeed, you must adopt them securely and responsibly, with data privacy, security, and compliance built in. Privacera AI Governance (PAIG) brings that governance to your modern data, analytics, and AI estates.

Built on the existing strengths of Privacera, PAIG uses purpose-built AI and LLMs to deliver dynamic, consistent, enterprise-wide security, privacy, and access governance.

Training data for generative AI models and embeddings is continuously scanned for sensitive data attributes, which are then tagged. More than 160 prebuilt classifications and rules are available, and your organization can extend them to fit its own requirements.

Fine-grained data-level controls are established based on your sensitive data discovery and tagging. You can then efficiently mask, encrypt, or remove sensitive data in your pre-training data pipeline.
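To make the scan, tag, and mask flow concrete, here is a minimal Python sketch. It is not PAIG's API or implementation: the rule names, regular expressions, and the functions `tag_text`, `mask_text`, and `sanitize_record` are all hypothetical, and two simple regex rules stand in for a real classification library.

```python
import re

# Hypothetical classification rules (illustrative only, not PAIG's built-in set).
RULES = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def tag_text(text):
    """Return the sorted tags whose rule matches somewhere in the text."""
    return sorted(tag for tag, pattern in RULES.items() if pattern.search(text))


def mask_text(text, tags_to_mask):
    """Replace every match of the given tags with a placeholder such as <EMAIL>."""
    for tag in tags_to_mask:
        text = RULES[tag].sub(f"<{tag}>", text)
    return text


def sanitize_record(text, remove_tags=frozenset(), mask_tags=frozenset()):
    """Drop the record (return None) if it carries a tag in remove_tags,
    otherwise mask any tags listed in mask_tags and return the cleaned text."""
    tags = tag_text(text)
    if set(remove_tags).intersection(tags):
        return None
    return mask_text(text, [t for t in tags if t in mask_tags])
```

In a pre-training pipeline, a function like `sanitize_record` would run over each record before it reaches the training set, with the remove and mask sets chosen by policy. Encryption is omitted here to keep the sketch dependency-free.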

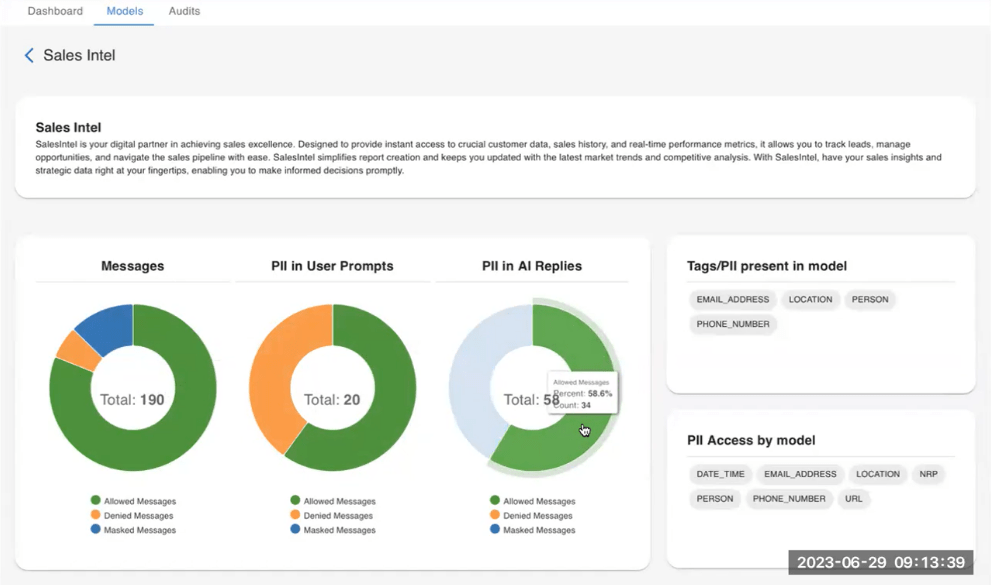

User inputs and queries are scanned in real time for sensitive data elements. PAIG then applies the appropriate privacy controls based on user identity, data access permissions, and governance policies.

Model responses are also scanned in real time for sensitive data elements, and the appropriate controls are applied based on user identity and data access permissions.
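The idea of applying identity-based controls on both sides of a model call can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how PAIG is implemented: the roles, the `POLICY` table, the patterns, and the functions `apply_controls` and `guarded_call` are hypothetical, and `model` is any callable that takes a prompt string and returns a response string.

```python
import re

# Hypothetical detectors for sensitive data elements (illustrative only).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical policy: tags each role may see unredacted.
POLICY = {
    "support_agent": {"EMAIL"},
    "analyst": set(),
}


def apply_controls(text, role):
    """Redact every tag the role is not permitted to see.
    Unknown roles get no permissions, so everything detected is redacted."""
    allowed = POLICY.get(role, set())
    for tag, pattern in PATTERNS.items():
        if tag not in allowed:
            text = pattern.sub(f"<{tag}>", text)
    return text


def guarded_call(model, prompt, role):
    """Apply controls to the prompt, call the model, then apply controls to the response."""
    safe_prompt = apply_controls(prompt, role)
    response = model(safe_prompt)
    return apply_controls(response, role)
```

For example, with an echo function standing in for the model, `guarded_call(lambda p: "Echo: " + p, "Mail bob@example.com", "analyst")` redacts the address, while the same call for `"support_agent"` leaves it intact.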

All model usage, user behaviors, responses, and access events for your LLMs are continuously monitored and collected to drive analytics on usage, security, and risk patterns.
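As a rough illustration of what collecting access events for analytics could look like, the Python sketch below records one event per prompt or response and aggregates them. The `AuditEvent` fields, the action names, and the `summarize` function are assumptions made for this example and do not describe PAIG's actual audit schema.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass, field


@dataclass
class AuditEvent:
    """Hypothetical audit record for one prompt or response."""
    user: str
    direction: str                              # "prompt" or "response"
    tags: list = field(default_factory=list)    # sensitive tags detected
    action: str = "allowed"                     # "allowed", "masked", or "denied"


def to_json_line(event):
    """Serialize an event as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


def summarize(events):
    """Count events by action taken and by sensitive tag detected."""
    by_action = Counter(e.action for e in events)
    by_tag = Counter(tag for e in events for tag in e.tags)
    return {"by_action": dict(by_action), "by_tag": dict(by_tag)}
```

Summaries like these are the raw material for spotting usage and risk patterns, such as a user whose prompts are repeatedly masked or denied.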

Govern your AI or lose more than you gain.